- Department

Overview

Contact us

- Research

Research in brief

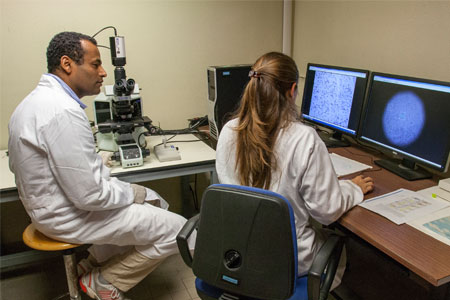

Research activities

Research facilities

- Teaching

PhD programmes and postgraduate training

Teaching services

- Community Engagement

Information for community

Contact us

- People

- Contacts
